Why Your Clients Are Not Progressing

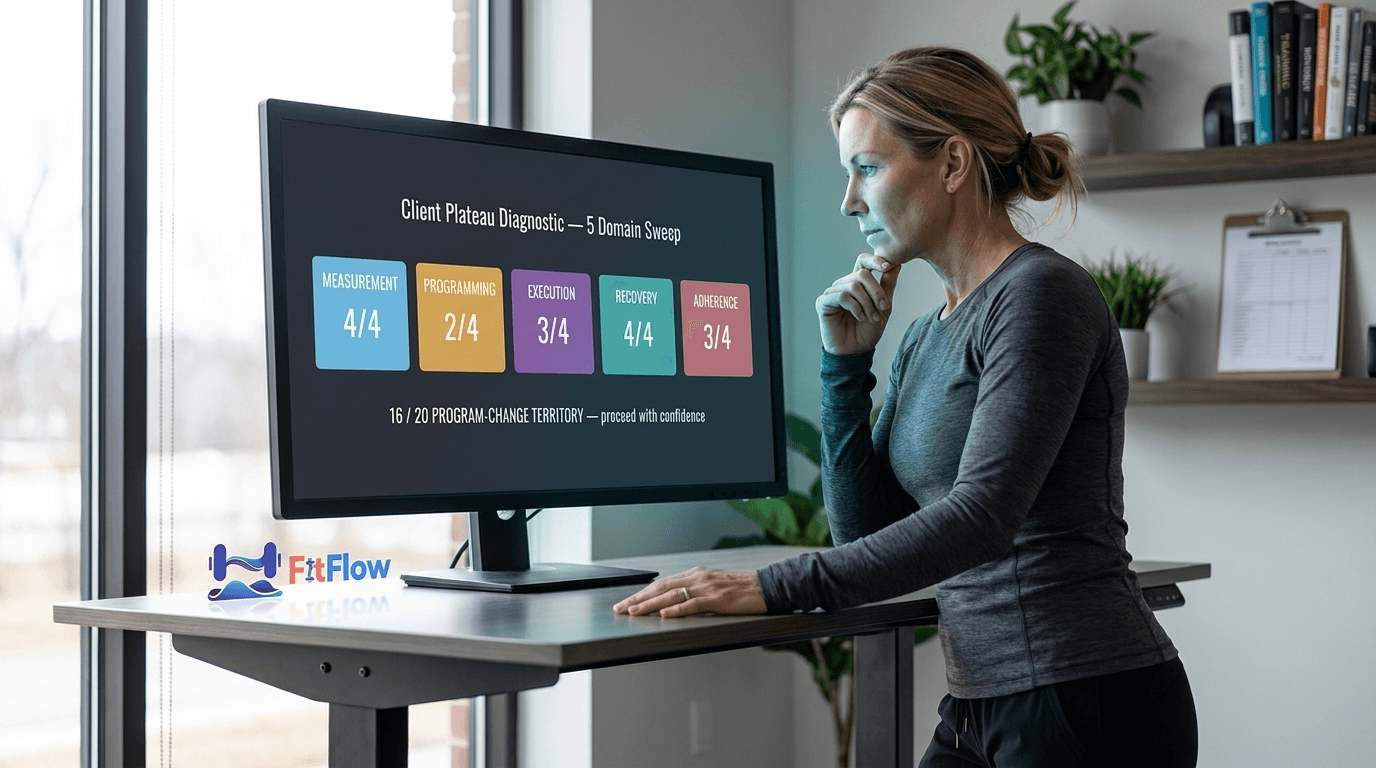

It is Thursday morning. You are looking at a roster of thirty-four active clients and a flagged list of eleven — roughly a third — whose logs have gone quiet on progress for three to four weeks. The instinct is immediate: eleven clients, eleven plateau conversations, eleven new program variants by Sunday. You open a blank template.

Stop. Run a ten-minute diagnostic on each of the eleven first. When we did this last month on a roster like yours, the eleven "plateaus" resolved differently: four were measurement gaps (the client was progressing — the data stream could not see it), three were adherence issues (session logs said ninety-two per cent; attendance records said sixty-one per cent), two were sleep and stress deficits compounding across the block, one was straightforward volume creep without a deload. Exactly one needed a new program. Ten of those eleven rewrites would have reset the adaptation clock on problems that did not live in the program.

A plateau is a symptom, not a diagnosis. Calling something a plateau tells you nothing about its cause, and the cause determines the fix. In most cases, a client's stall lives in one of four domains that have nothing to do with program design: Measurement, Execution, Recovery, or Adherence. Change the program before diagnosing those, and you reset a working adaptation cycle while leaving the actual failure untouched.

This is not contrarian for its own sake. Every major 2026 report points the same direction. The ACSM 2026 Worldwide Fitness Trends Report ranks wearable-and-AI-driven plateau prediction as the year's #1 technology signal, alongside ACSM's first resistance-training guideline update in seventeen years. The Trainerize 2026 State of the Personal Training Industry Report puts average client tenure at roughly ninety days and names progress visibility as the silent driver of churn. The Les Mills 2026 Global Fitness Report, surveying 10,442 gym-goers across five continents, found 30% have hit plateaus they cannot explain and 58% are confused by conflicting progression advice. On March 7, 2026, Barbell Medicine updated their public plateau guide with a seven-point lifter self-diagnostic — a tool with no trainer-facing equivalent at the same depth.

Four independent signals, one direction: plateau is a measurement and diagnostic problem, not a program-design problem. This post is the trainer-operated version of that insight.

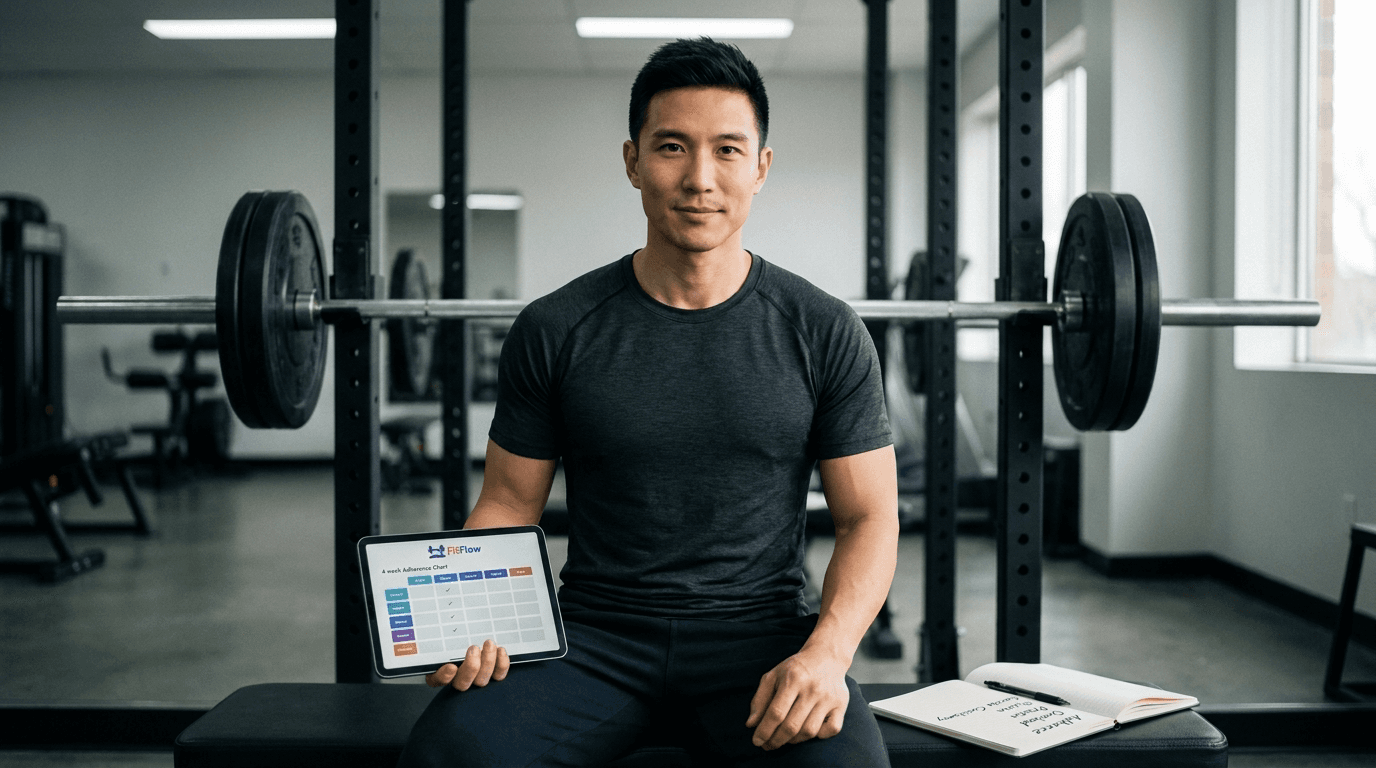

Download the Client Plateau Diagnostic Checklist — ten questions, five domains, scorable in under ten minutes per client. Flowchart, interpretation thresholds, and a client-facing in-session version included. Grab it at the end; it pairs directly with the framework below.

This is the upstream diagnostic: the tool you run on any plateaued client before deciding which deep-dive applies. It works whether you manage four clients or forty, and for the self-directed lifter six to eighteen months into training who cannot tell whether their own programming is the issue. For the mechanism-level treatment, see why progressive overload is misunderstood.

TL;DR — The 5-Domain Plateau Diagnostic

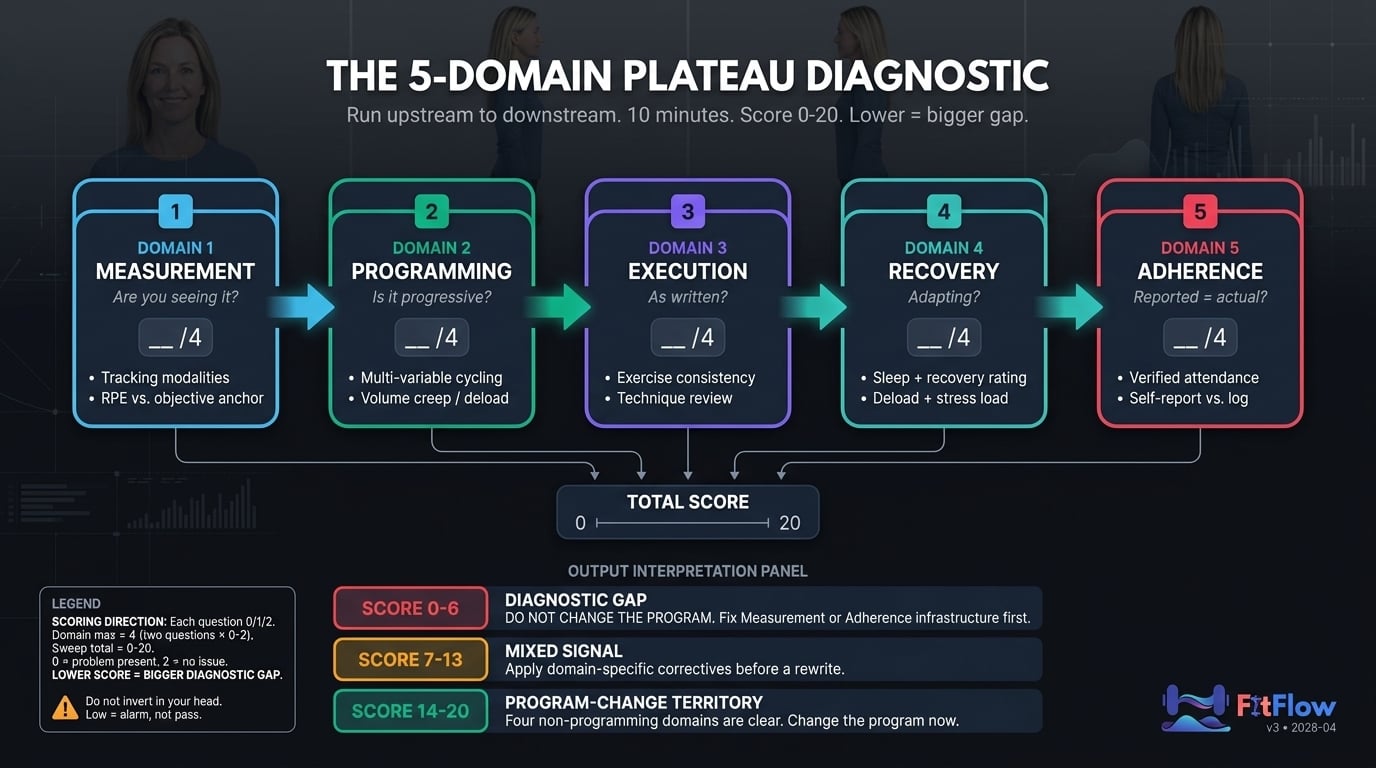

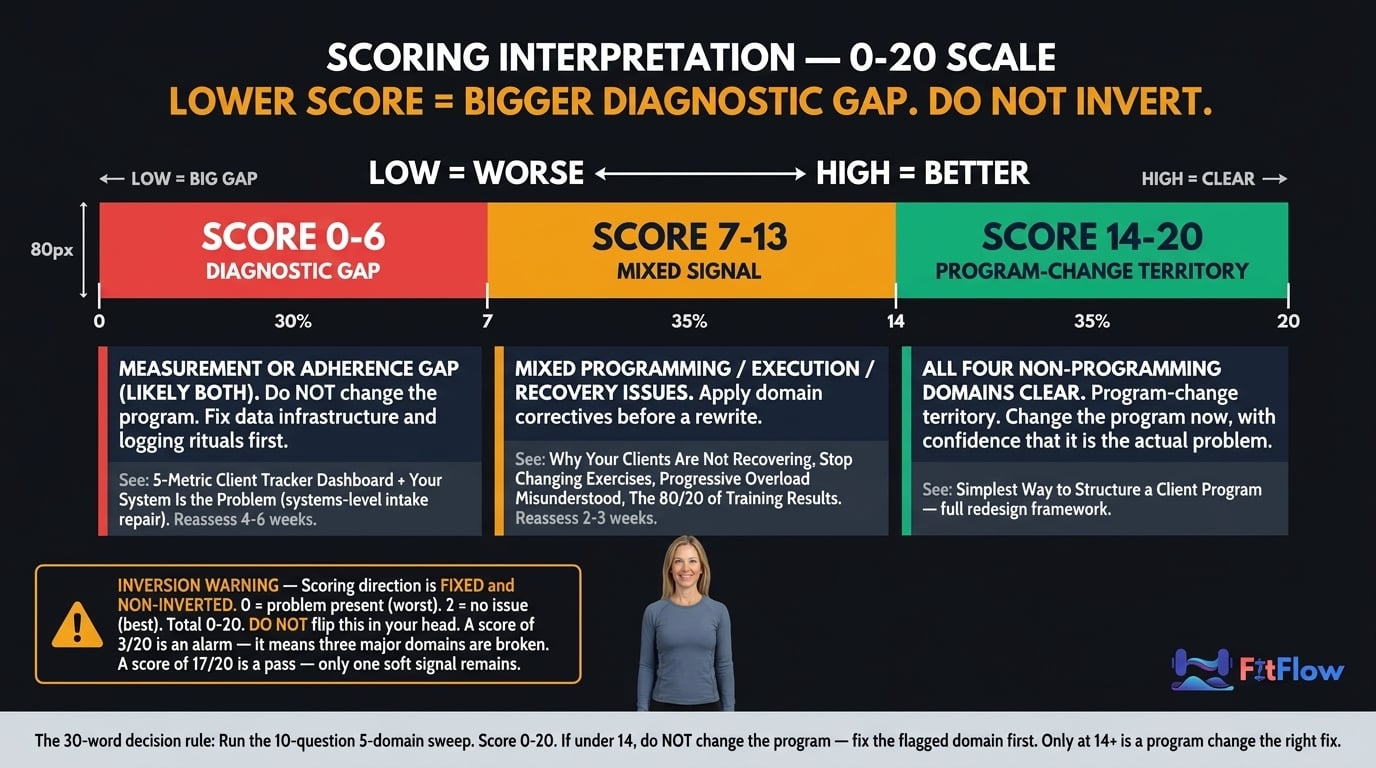

Before you change the program, run this 10-minute sweep on any plateaued client. Score each of ten questions (two per domain) on a 0/1/2 scale: 0 = problem present, 2 = no issue. Total: 0-20. Lower score = bigger diagnostic gap. Do not invert this in your head — a low score is an alarm, not a pass.

Measurement — Are you capturing progress accurately, or is the client progressing while your data stream misses it?

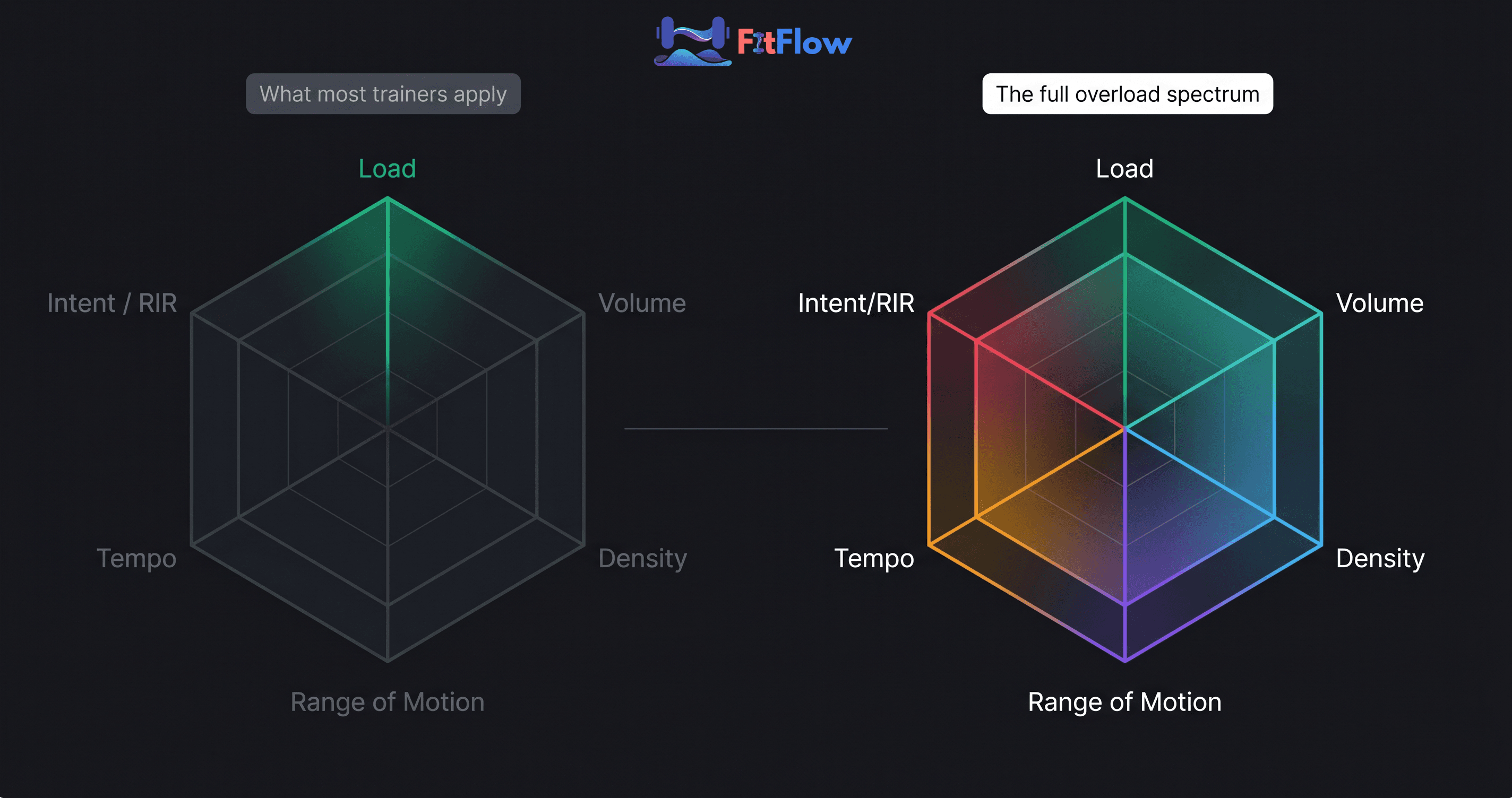

Programming — Is the program applying progressive overload across the full variable spectrum, or has one variable stalled while five others sit unused?

Execution — Is the client running the program as written, or has exercise churn and technique drift eroded the stimulus?

Recovery — Are the 163 hours outside the gym supporting adaptation, or cannibalizing it?

Adherence — Does what the client reports match what the client actually does? (Hint: rarely.)

Score 0-6: Measurement or Adherence gap. Fix upstream before touching the program. Score 7-13: Mixed Programming, Execution, or Recovery issues. Apply domain correctives. Score 14-20: Legitimate program-change territory.

Why "Change the Program" Is the Default — and Why That Is the Wrong Reflex

Trainers change the program because it feels like action. The client sees motion — a new split, a new block, a new rep scheme — the trainer performs coaching competence, and psychological discomfort with "nothing is changing" resolves inside a single conversation. Behavior that resolves discomfort is not the same as behavior that produces progress.

The math of program-hopping makes it worse. Consistency data cited in the hypertrophy failure deep-dive shows roughly 18% greater strength gains and 12% more hypertrophy from clients who complete a full block versus those who switch mid-cycle. Switching peaks at four-to-six weeks — exactly when the neural-to-muscular adaptation transition occurs and progress normally plateaus for two or three sessions before resuming. Trainers interpret the transition as program failure, switch, and restart the adaptation clock. The new program then appears to work for four to six weeks (because it is new, and the neural phase always produces the most visible gains), confirming the loop. A self-reinforcing diagnostic error.

The ACSM 2026 Resistance Training Position Stand frames progression as explicitly multi-dimensional: load, volume, frequency, exercise selection, tempo, range of motion, and density all count. If progression is multi-dimensional, plateau diagnosis must be multi-dimensional too. A single-variable diagnostic applied to a multi-variable adaptation process will misfire most of the time.

Change the program last, not first. After the other four domains have been cleared, not before.

The 5-Domain Diagnostic — How to Run It in Under Ten Minutes

The diagnostic is a single sweep across five domains, in fixed order: Measurement → Programming → Execution → Recovery → Adherence. Order matters. Each domain can invalidate findings below it. If Measurement is broken, none of the other four can be trusted — your "programming" assessment is running against a bad data stream. If Adherence is broken, every Programming finding is meaningless because the program is not actually being run.

Each of the ten questions — two per domain — is scored on a 0/1/2 scale:

0 = problem clearly present

1 = suspicion or mild signal

2 = no issue detected

Total range: 0-20. Lower score means a bigger diagnostic gap. If you are used to checklists that count problems, where a high total is bad, note that this one runs the other way on purpose: higher is better. A 3/20 on a client's file means almost every question flagged, not that three things are going well. Do not flip it in your head; a low score is an alarm, not a pass.

The routing rule:

| Score Band | Interpretation | Action |
|---|---|---|
| 0-6 | Measurement or Adherence gap, often both. | Do not change the program. Repair the data and adherence infrastructure first. Reassess in 4-6 weeks. |
| 7-13 | Mixed Programming, Execution, or Recovery issues. | Apply the domain corrective. Reassess in 2-3 weeks. |
| 14-20 | Four non-programming domains clear. | Legitimate program-change territory. Rewrite with confidence. |
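The scoring and routing rule above can be sketched in a few lines of Python. This is a minimal illustration, not a FitFlow tool: the function names (`total_score`, `flagged_domains`, `route`) and the example client are hypothetical, and the only things taken from the article are the five domains, the 0/1/2 scale, and the three score bands.

```python
from typing import Dict, List, Tuple

# Fixed sweep order from the article.
DOMAINS = ("Measurement", "Programming", "Execution", "Recovery", "Adherence")


def total_score(answers: Dict[str, Tuple[int, int]]) -> int:
    """Sum the two 0/1/2 answers recorded for each of the five domains."""
    total = 0
    for domain in DOMAINS:
        pair = answers[domain]
        if len(pair) != 2 or any(a not in (0, 1, 2) for a in pair):
            raise ValueError(f"{domain}: expected two answers scored 0, 1, or 2")
        total += sum(pair)
    return total


def flagged_domains(answers: Dict[str, Tuple[int, int]]) -> List[str]:
    """Domains where at least one question scored 0 (problem clearly present)."""
    return [d for d in DOMAINS if 0 in answers[d]]


def route(total: int) -> str:
    """Map a 0-20 total onto the three score bands."""
    if not 0 <= total <= 20:
        raise ValueError("total must be between 0 and 20")
    if total <= 6:
        return "Repair data and adherence infrastructure; reassess in 4-6 weeks."
    if total <= 13:
        return "Apply the domain corrective; reassess in 2-3 weeks."
    return "Legitimate program-change territory."


# Hypothetical client: intensity is unanchored and attendance is unverified.
example = {
    "Measurement": (2, 0),
    "Programming": (2, 2),
    "Execution": (2, 1),
    "Recovery": (1, 2),
    "Adherence": (0, 1),
}

print(total_score(example), flagged_domains(example), route(total_score(example)))
```

Keeping `flagged_domains` separate from `route` is a deliberate choice in this sketch: the total picks the band, but the list of zero-scored domains tells you which corrective to reach for first.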

The five sections below walk through each domain in turn. Each ends with its two checklist questions, the evidence behind them, and the FitFlow post you route to when the domain flags.

Domain 1 — Measurement: Are You Actually Capturing Progress?

Diagnostic question: Are you measuring the right things, frequently enough, with enough precision, to detect progression or stall?

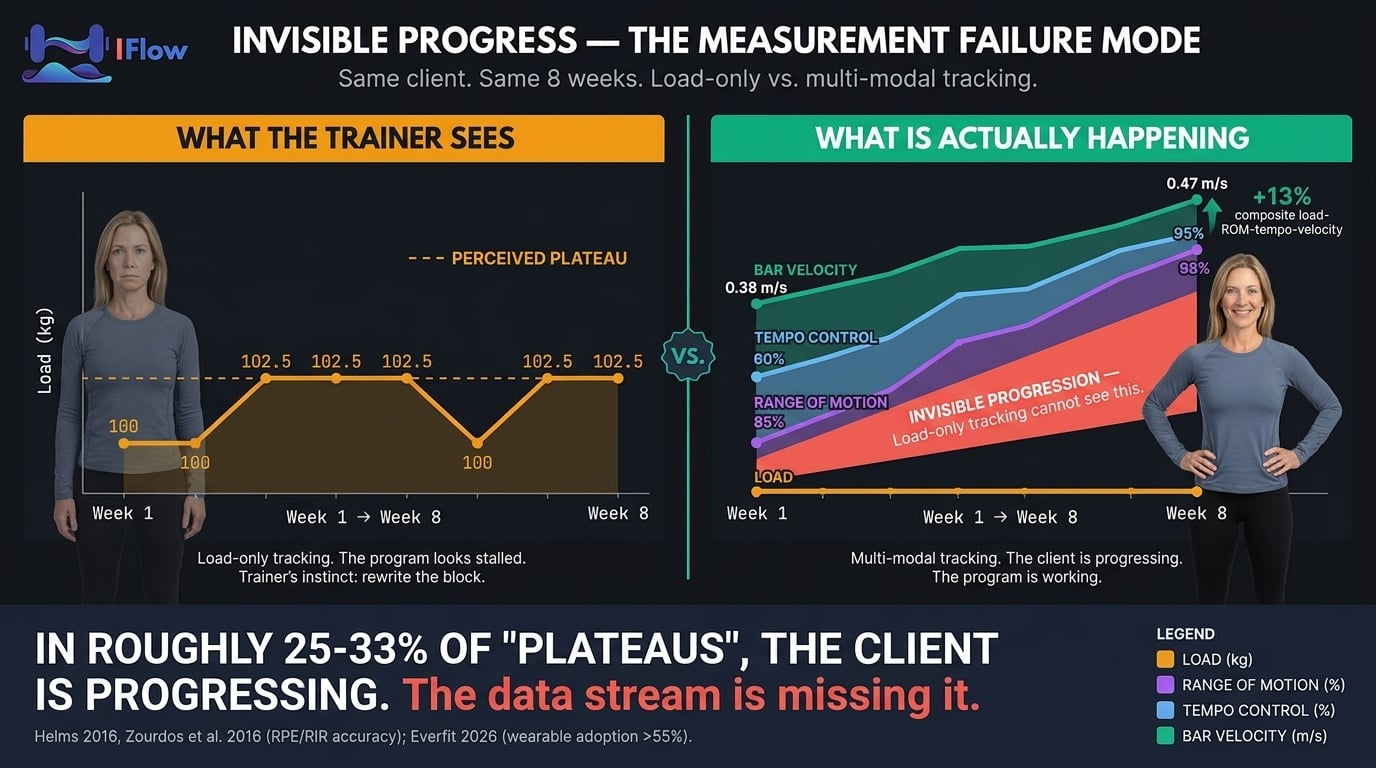

You cannot diagnose a plateau you cannot see. In roughly a quarter to a third of the cases we have triaged, "the client is not progressing" means "the client is progressing, and the data stream is missing it." That is a measurement failure — not a client failure or a program failure — and it is the cheapest one to fix.

Everfit's 2026 Online Personal Training Trends reports wearable integration exceeds 55% in fitness studios and roughly half of U.S. adults own a fitness tracker. Most trainers now have HRV, sleep, and readiness data they are not reading. The question is whether you are converting it into programming decisions on a two-week cadence.

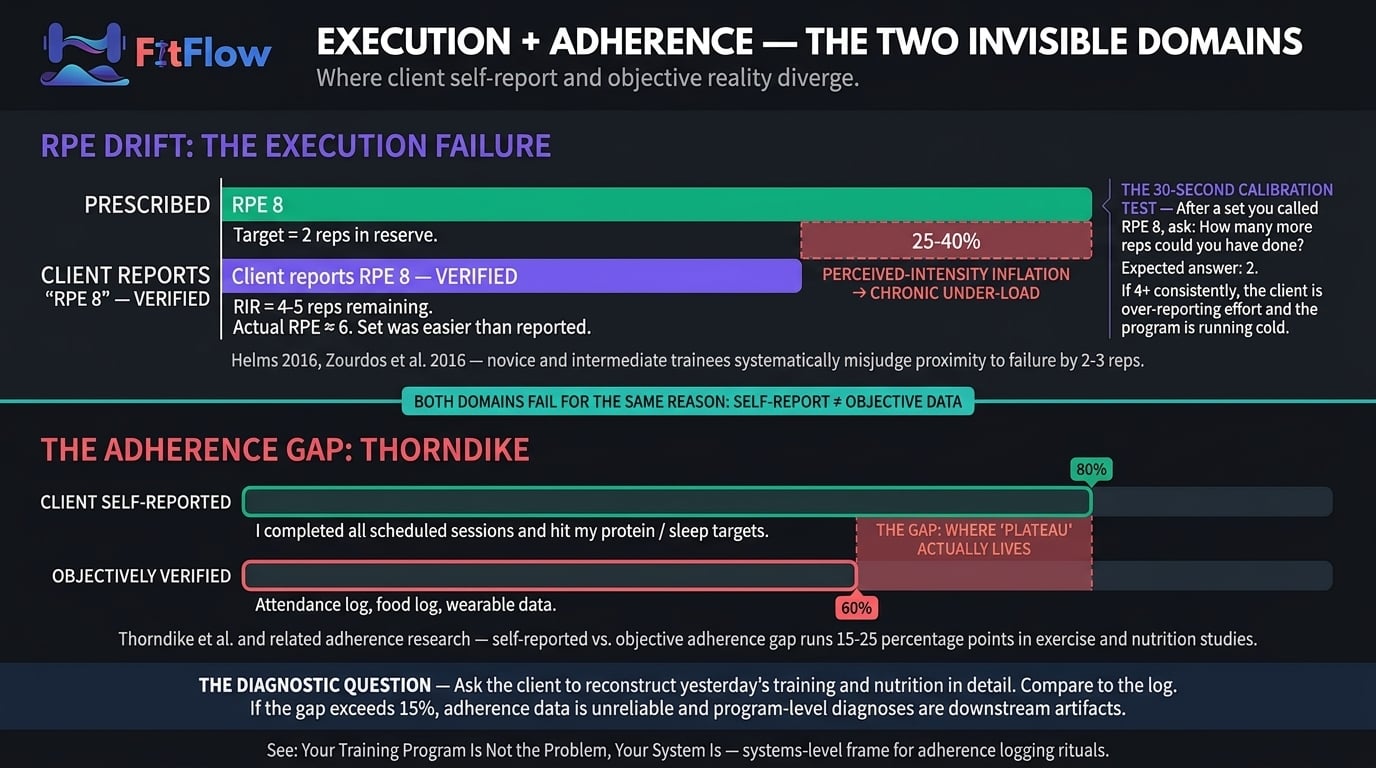

The harder problem is intensity measurement. Most trainers anchor intensity to client-reported RPE, which has a known systematic bias.

The RPE Drift Trap

RPE drift is the chronic over-reporting of effort by novice and intermediate trainees — typically 2-3 reps of over-estimated proximity to failure. Meta-analytic work by Helms on RPE/RIR accuracy, building on Zourdos et al. (2016), shows untrained raters systematically misjudge true proximity to failure — most often perceiving sets as closer to failure than they actually are. If your intensity data is 100% client-reported RPE, perceived intensity is inflated by 25-40% on paper — you are chronically under-loading in reality. Your client does not have a plateau; your intensity instrument does.

The thirty-second calibration test: after any set prescribed at RPE 8, ask "how many more reps could you have done?" The answer should be 2. If it is consistently 4 or more across sessions and lifts, the client is over-reporting effort by at least two RPE points and programming is running cold. For the mechanism treatment, see why progressive overload is misunderstood.
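The calibration test can be written down as simple arithmetic. The sketch below assumes the RIR-based RPE scale the test itself relies on (RPE 8 corresponds to 2 reps left, so RPE = 10 minus reps in reserve) and, as a further assumption, reads "consistently" as the median across the answers you collected. The function names and the default threshold parameter are illustrative.

```python
from statistics import median
from typing import Sequence


def implied_rpe(reps_in_reserve: int) -> int:
    """RPE implied by a 'how many more reps?' answer (RPE 8 = 2 reps left)."""
    if reps_in_reserve < 0:
        raise ValueError("reps in reserve cannot be negative")
    return 10 - reps_in_reserve


def overreport_points(prescribed_rpe: int, rir_answers: Sequence[int]) -> float:
    """Median gap, in RPE points, between the prescribed RPE and the implied RPE."""
    if not rir_answers:
        raise ValueError("need at least one answer")
    return median([prescribed_rpe - implied_rpe(r) for r in rir_answers])


def running_cold(prescribed_rpe: int, rir_answers: Sequence[int],
                 threshold: float = 2.0) -> bool:
    """True when the client over-reports effort by `threshold` RPE points or more."""
    return overreport_points(prescribed_rpe, rir_answers) >= threshold


# Sets prescribed at RPE 8; the client says 4, 4, 5, and 4 reps were left.
print(overreport_points(8, [4, 4, 5, 4]), running_cold(8, [4, 4, 5, 4]))
```

A median is used rather than a mean so that one unusually hard or easy set does not decide the result; that is a design choice of this sketch, not something the calibration test prescribes.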

The complementary solution is velocity-based feedback. VBT research synthesized by Weakley et al. (2021) validates bar-speed as an objective, real-time proxy for proximity to failure that does not drift. You do not need a linear position transducer on every client; a phone-mounted app or a simple first-to-last-rep velocity-loss observation catches the gap. Any objective anchor — verified load-at-target-reps, bar-speed loss, or RIR calibrated over 4+ weeks — is sufficient. The failure mode is relying on raw RPE alone.
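For the first-to-last-rep observation, the arithmetic is a percentage drop in bar speed across the set. The sketch below assumes you have mean velocities per rep in metres per second from whatever app or device you use; the function name is hypothetical, and no "close to failure" cut-off is encoded because the article does not give one.

```python
from typing import Sequence


def velocity_loss_pct(rep_velocities: Sequence[float]) -> float:
    """Percentage drop in bar speed from the first rep of a set to the last."""
    if len(rep_velocities) < 2:
        raise ValueError("need at least two reps")
    first, last = rep_velocities[0], rep_velocities[-1]
    if first <= 0:
        raise ValueError("first-rep velocity must be positive")
    return (first - last) / first * 100.0


# Same load, same rep count, two hypothetical sessions.
easy_set = [0.50, 0.49, 0.48]   # barely slows down
hard_set = [0.50, 0.45, 0.38]   # slows markedly by the last rep
print(round(velocity_loss_pct(easy_set), 1), round(velocity_loss_pct(hard_set), 1))
```

Comparing the two sets at the same load is the useful part: if the client reports both as RPE 8 but one shows far less velocity loss, the reported effort and the objective anchor disagree.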

The second leg of Measurement is tracking infrastructure: capturing at least two progression modalities (load, reps, volume, body composition, girths, subjective markers) at least every two weeks. If the answer is "I track whatever shows up in the session note," the domain will score low regardless of program quality. The 5-metric dashboard we built for exactly this problem is the minimum infrastructure; any equivalent biweekly two-modality capture clears the domain.

Measurement — The Two Checklist Questions

"Are you capturing measurable progression data in at least two modalities (load, reps, volume, body composition, girths, subjective markers) at least every 2 weeks?"

2 = Two or more modalities tracked every 2 weeks or more frequently.

1 = One modality tracked, OR monthly but incomplete.

0 = No systematic tracking. Stop the diagnostic; fix the infrastructure first.

"Is your intensity measurement anchored to something objective (load at target reps, bar-speed tracking, or RIR calibrated over 4+ weeks), or is it 100% client-reported RPE?"

2 = Anchored to objective (load + reps at target; video / VBT; calibrated RIR).

1 = Mixed (RPE plus occasional objective anchor).

0 = 100% client-reported RPE. RPE drift is likely inflating intensity 25-40%.

If both clear, Measurement is clean — move to Programming. If either scores 0, stop diagnosing downstream. Until you can see the client accurately, every Programming, Execution, Recovery, and Adherence finding is a downstream artifact. Do not change the program from a Measurement failure; fix the sensor first.

Domain 2 — Programming: Is the Program Actually Progressive?

Diagnostic question: Is the program applying progressive overload across the full variable spectrum, or is it stuck on one dimension that has plateaued?

The most common Programming failure is single-variable application. "Add weight every session" is one dimension of a six-dimension spectrum: load, volume, frequency, exercise selection, tempo, range of motion. When one stalls (and it will, for every intermediate and advanced trainee), five others remain. Trainers operating on one dimension are applying linear progression, not progressive overload — and linear progression expires. The full framework is in progressive overload, misunderstood; this domain checks whether it has been applied in the last eight weeks or has silently collapsed to load-only.

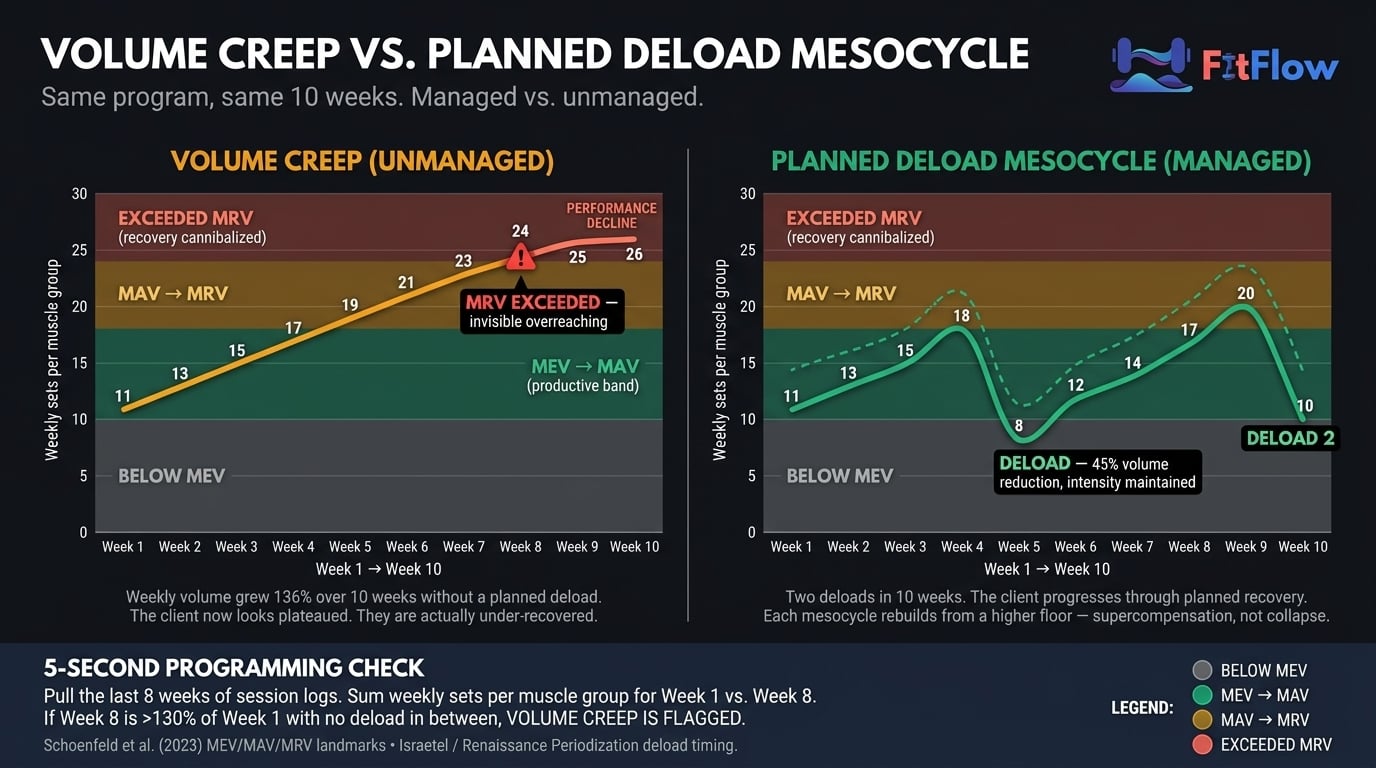

Volume Creep — The Silent Programming Failure

The second common failure is volume creep: unplanned accumulation of weekly sets as trainers react to plateau by adding work. Schoenfeld's volume-landmark research (MEV, MAV, MRV) establishes that plateau at MRV behaves completely differently from plateau at MEV and requires the opposite intervention. MRV plateau presents with rising fatigue, elevated soreness, and declining session quality — fix with a deload and a volume reduction to MAV. MEV plateau presents with normal fatigue and clean session quality — fix with a volume increase toward MAV. The wrong intervention makes things worse.

The deload-cadence lens is Israetel's Renaissance Periodization mesocycle model: most clients need a planned deload every 4-6 weeks, scheduled before the plateau. Mind Pump's April 2026 Minimum Effective Dose episode is the practitioner-audience version: one or two well-dosed sessions per week often outperform five or six mediocre ones, because the former respect MRV. If your client's weekly volume has climbed thirty per cent or more over the block with no deload in sight, the Programming domain flags, and a rewrite is almost certainly the wrong response. The right response is scheduling the deload they needed four weeks ago. For variable prioritization, see the 80/20 of training results.

Programming — The Two Checklist Questions

"Has the program cycled through at least two progressive-overload variables (load, volume, density, range of motion, tempo, RIR) in the last 8 weeks, OR does it rely only on 'add weight every session'?"

2 = Two or more variables cycled deliberately.

1 = One variable cycled plus one attempted.

0 = Single-variable (load-only) progression. Linear progression has expired.

"Has weekly volume increased by more than 30% over the last training block WITHOUT a planned deload, OR has a deload been implemented in the last 6 weeks?"

2 = Deload within 6 weeks, OR weekly volume less than 20% above block baseline.

1 = Deload scheduled but not yet run, OR volume creep in 20-30% range.

0 = Volume crept >30% with no deload. MRV volume creep is one of the most common and least diagnosed Programming failures.

If both clear, Programming is clean — move to Execution. If either scores 0, apply the corrective (variable rotation or scheduled deload) and reassess in 2-3 weeks before a rewrite. A rewrite layered on single-variable progression or MRV creep produces more redesign than necessary and resets adaptation that was still salvageable.
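The volume-creep question reduces to a percentage change plus a deload check. The sketch below is one reading of the rubric, with assumptions stated plainly: creep is measured as weekly working sets now versus at the start of the block, the 20-30% range is treated as a score of 1, and a deload that is scheduled but not yet run lifts a would-be 0 to a 1. Function and parameter names are hypothetical.

```python
from typing import Optional


def volume_creep_pct(baseline_weekly_sets: float, current_weekly_sets: float) -> float:
    """Percentage change in weekly sets relative to the start of the block."""
    if baseline_weekly_sets <= 0:
        raise ValueError("baseline must be positive")
    return (current_weekly_sets - baseline_weekly_sets) * 100.0 / baseline_weekly_sets


def score_volume_question(creep_pct: float,
                          weeks_since_deload: Optional[int],
                          deload_scheduled: bool = False) -> int:
    """Score the second Programming question on the 0/1/2 scale.

    weeks_since_deload is None when no deload has been run this block.
    """
    if weeks_since_deload is not None and weeks_since_deload <= 6:
        return 2                      # deload run within the last 6 weeks
    if creep_pct > 30:
        return 1 if deload_scheduled else 0
    if creep_pct >= 20:
        return 1                      # creep in the 20-30% range
    return 2                          # volume close to block baseline


# Hypothetical client: 40 weekly sets at block start, 54 now, no deload planned.
creep = volume_creep_pct(40, 54)
print(creep, score_volume_question(creep, weeks_since_deload=None))
```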

Domain 3 — Execution: Is the Client Performing the Program as Written?

Diagnostic question: Is the program being executed as designed, or has exercise churn, technique drift, or tempo abandonment eroded the stimulus?

A well-designed program executed inconsistently is indistinguishable from a poorly designed program executed perfectly. Three failure modes account for nearly all Execution plateaus:

Exercise churn — the client or trainer substitutes across sessions. "I did not feel like bench today, we did incline." "The rack was busy, we did dumbbell press." Each substitution resets skill-specific neural adaptation and stimulus-specific mechanical tension. The full corrective is in stop changing exercises, fix this instead, documenting roughly 18% strength loss over a block that churns primary movements weekly. For the scaling trainer, churn is almost always the trainer's failure mode — rack availability or comfort-avoidance that looks trivial and is not.

Technique drift — the pattern degrades across the block. Squat depth visibly shortens across weeks, the bar drifts forward on bench, tempo collapses on the eccentric. The written load is the same but the actual exercise is now an easier variant. Video catches this in sixty seconds; live observation misses it more often than trainers admit.

Tempo abandonment — prescribed tempos (3-1-1-0, paused reps, two-second eccentrics) get dropped for "just moving the weight." Time-under-tension and eccentric control disappear silently; what looks on paper like the same program becomes a lower-quality stimulus.

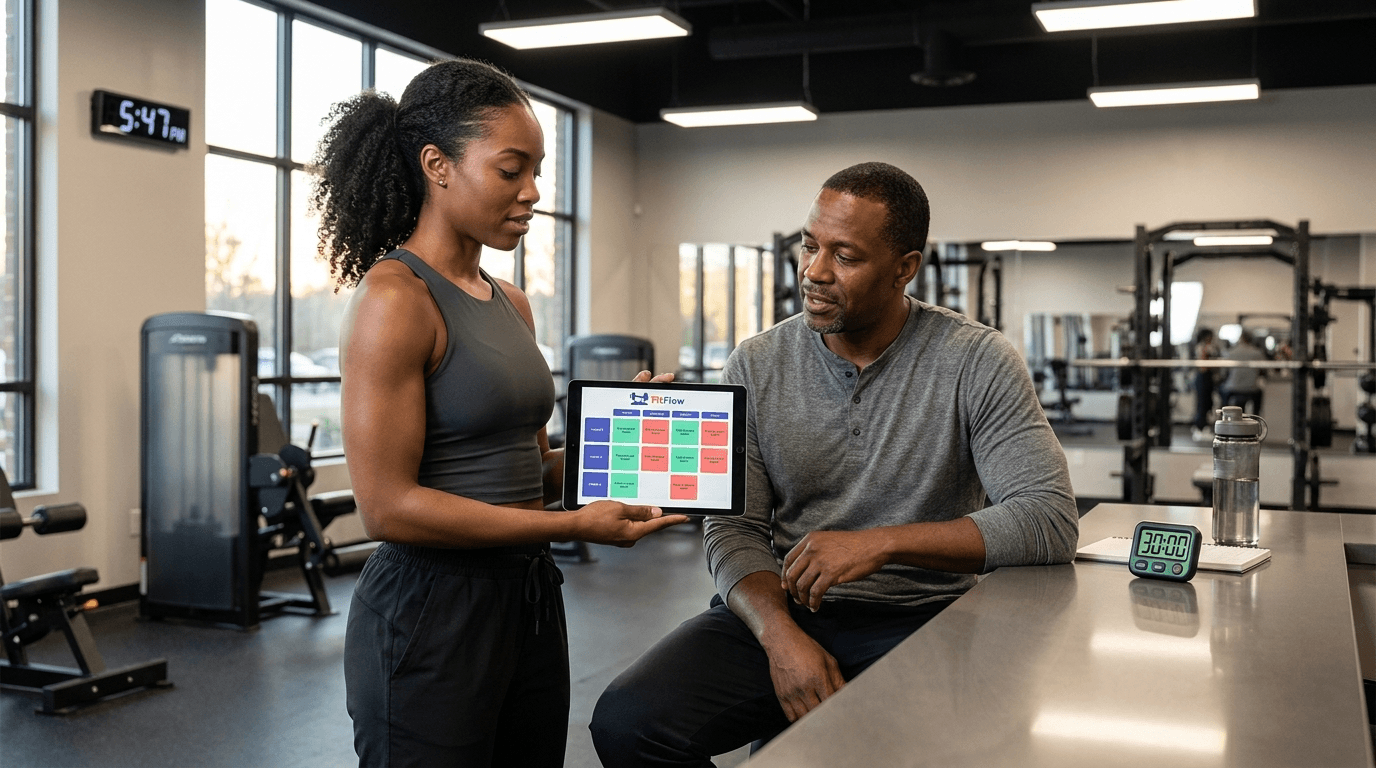

Execution failure in a high-roster practice is rarely a willpower problem; it is almost always a delivery-system problem — an intake-and-check-in ritual not built to catch substitutions and drift at scale. That is the argument of your training program is not the problem, your system is, the most important cross-link for this domain when you manage fifteen-plus clients.

Execution — The Two Checklist Questions

"Has the client executed the same core lifts with ≤1 exercise substitution over the last 6 weeks?"

2 = Program executed as written with minimal substitution.

1 = 2-3 substitutions, no pattern.

0 = 4+ substitutions or regular exercise churn.

"Have you reviewed the client's technique (video or live) on primary lifts in the last 4 weeks, and is it within spec (depth, bar path, tempo)?"

2 = Video-verified within 4 weeks, technique within spec.

1 = Live-observed only; minor drift noted.

0 = No technique review in 4+ weeks, OR verified drift exists.

If both clear, Execution is clean — move to Recovery. If either scores 0, churn or drift is the plateau, not the program.
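Counting substitutions is easier to keep honest when it is done from the log rather than from memory. The sketch below assumes a hypothetical log format, a list of (planned lift, performed lift) pairs for the core lifts over the last six weeks, and applies the first Execution question's thresholds. It does not try to judge whether the substitutions form a "pattern"; that part of the rubric stays a trainer's call.

```python
from typing import Iterable, Tuple


def count_substitutions(slots: Iterable[Tuple[str, str]]) -> int:
    """Count core-lift slots where the performed lift differs from the planned one."""
    return sum(
        1
        for planned, performed in slots
        if planned.strip().lower() != performed.strip().lower()
    )


def score_substitutions(substitutions: int) -> int:
    """0/1/2 score: 0-1 substitutions = 2, 2-3 = 1, 4 or more = 0."""
    if substitutions < 0:
        raise ValueError("substitution count cannot be negative")
    if substitutions <= 1:
        return 2
    if substitutions <= 3:
        return 1
    return 0


# Hypothetical six-week excerpt: (planned, performed) per core-lift slot.
log = [
    ("bench press", "bench press"),
    ("bench press", "incline press"),    # "did not feel like bench today"
    ("back squat", "back squat"),
    ("bench press", "dumbbell press"),   # "the rack was busy"
    ("back squat", "Back Squat"),        # same lift, different capitalisation
]

n = count_substitutions(log)
print(n, score_substitutions(n))
```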

Domain 4 — Recovery: Are the 163 Hours Supporting Adaptation, or Cannibalizing It?

Diagnostic question: Is the client recovering enough between sessions for the training stimulus to produce adaptation, or are cumulative fatigue and allostatic load blocking it?

Training produces stimulus. Recovery produces adaptation. If the client is not recovering, the stimulus does not convert into progress — the plateau is a recovery failure wearing a programming-failure mask. Recovery is the most cross-cited cause of "plateau" in the evidence-based literature. This is why Recovery has its own deep-dive as the direct sibling of this post: why your clients are not recovering documents the five hidden barriers (sleep architecture, allostatic load, nutrition myths, deload deprivation, wearable blindness). This domain is deliberately shorter because the deep-dive exists; your job here is to flag whether Recovery is a probable cause and route to the full audit.

A note on scope. Recovery inputs in this domain are behavioral markers a trainer can observe and act on — prescribed training load, sleep duration the client reports, subjective recovery ratings, and planned deload cadence. They are not clinical assessments. If a client reports persistent sleep disruption, unexplained fatigue beyond training context, or mood changes, that is a referral to a physician or registered professional, not a trainer intervention.

Two anchors. First, the April 2026 WHOOP / Nature Communications study — 4.3 million nights across 14,689 participants — shows high-intensity exercise within four hours of bedtime delays sleep onset by thirty-six minutes, reduces total sleep by twenty-two minutes, and produces a 32.6% decrease in overnight HRV. Most clients training at 6-8 PM are systematically shorting recovery. Second, the allostatic-load framework — originally formalized by McEwen and Stellar (1993) and applied to exercise contexts in recent Frontiers in Physiology work (2025) — establishes that the body does not categorize stress by source. A client's work restructure, their six-month-old's sleep regression, and their deadlift PR compete for the same finite recovery budget.

Note the overlap with Programming: when the volume-creep check flags and this domain flags, confidence in a Recovery-Programming interaction rises sharply. These are two views of the same failure: volume exceeding what current recovery capacity can convert into adaptation. The fix is the same from either direction: deload, reduce working volume toward MAV, and reassess in 2-3 weeks before concluding the program needs a rewrite.

Recovery — The Two Checklist Questions

"In the last 4 weeks, has the client reported average sleep duration ≥7 hours AND subjective recovery rating ≥6/10?"

2 = Both criteria met.

1 = One met; the other borderline.

0 = No. Recovery capacity is compromised; do not diagnose Programming until addressed.

"Has the client had a planned deload or reduced-volume week in the last 6 weeks, OR does their stress load (work, family, life events) appear stable or declining?"

2 = Deload completed or stress stable/declining.

1 = Deload overdue but planned; stress moderate.

0 = No deload in 6+ weeks AND elevated stress. Recovery deficit is a high-probability plateau cause.

If both clear, Recovery is clean — move to Adherence. If either scores 0, defer Programming changes, implement a structured deload, and run the five-barrier recovery audit before concluding the program is the problem.

Domain 5 — Adherence: Does What the Client Reports Match What the Client Actually Does?

Diagnostic question: Is the program being executed as the client reports it, or is there a gap between self-reported adherence and actual execution?

This domain is the hardest to diagnose because it depends on the data the client is least reliable about. The Thorndike et al. self-report validation work and subsequent exercise-adherence research show self-reported adherence running 15-25 percentage points higher than objective measurement. A client who says they completed twelve sessions often shows nine on the attendance log; a diary reporting 150 g protein often reconciles against a 110 g 24-hour recall. The gap between reported and actual is where a non-trivial share of "plateaus" lives.

A brief note on framing. A prior FitFlow post — your training program is not the problem, your system is — argues as an editorial thesis that the system is the root cause. This diagnostic treats the system as one of five domains to assess in sequence. Both frames hold at different levels: Adherence is one fifth of the diagnostic, and when it flags, the systems fix is usually the corrective. That post is the editorial argument; this one is the diagnostic tool.

Two failure modes dominate:

Attendance drift — the client completes 65-80% of scheduled sessions over an eight-week block while self-reporting 90%+. Program dose is 65-80% of prescribed. A rewrite does not fix attendance. The behavioral analog is why perfect diets fail, which applies the same dynamics to nutrition.

Log-report divergence — the client's description of yesterday does not match the log. Often the log is aspirational (what the client intended), not descriptive (what happened). A logging-ritual failure, not a motivation problem, repaired by restructuring intake and check-in — treated in programming for busy clients when the constraint is client time.

Trainerize 2026 data connects the domain to retention: when clients cannot see progress — because Measurement is broken, or because Adherence is low enough there is not much progress to see — engagement declines silently and churn follows at ninety days. This is where the diagnostic crosses from training science into retention economics.

Adherence — The Two Checklist Questions

"Has the client completed ≥90% of scheduled sessions in the last 8 weeks (verified by attendance log, not self-report)?"

2 = ≥90% attendance verified.

1 = 75-89% attendance; pattern acceptable.

0 = <75% or unverifiable. Program not running at prescribed dose; program change will not fix attendance.

"When you ask the client to describe yesterday's training and nutrition in detail, does their description align with what their log shows (within ~15%)?"

2 = Close alignment; client is self-aware.

1 = Moderate gap (15-25%); common, recoverable.

0 = Large gap (>25%) or client cannot reconstruct yesterday. Repair intake and logging before trusting any programming diagnosis.

If both clear, Adherence is clean — the sweep is complete. If either scores 0, repair intake and logging before changing the program. Running a new program on a client with sub-75% attendance or >25% self-report gap is programming in the dark.
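The attendance question is a ratio and two cut-offs, and the self-report gap is a subtraction in percentage points. The sketch below uses hypothetical function names; the thresholds come from the first Adherence question, and the 92 versus 61 figures are the logged-versus-attended example from the opening of this post.

```python
from typing import Optional


def attendance_pct(completed: int, scheduled: int) -> float:
    """Verified attendance as a percentage of scheduled sessions."""
    if scheduled <= 0:
        raise ValueError("scheduled sessions must be positive")
    return 100.0 * completed / scheduled


def score_attendance(verified_pct: Optional[float]) -> int:
    """0/1/2 score; None means attendance cannot be verified, which scores 0."""
    if verified_pct is None:
        return 0
    if verified_pct >= 90:
        return 2
    if verified_pct >= 75:
        return 1
    return 0


def self_report_gap(reported_pct: float, verified_pct: float) -> float:
    """Gap in percentage points between self-reported and verified adherence."""
    return reported_pct - verified_pct


# Session logs said 92 per cent; attendance records said 61 per cent.
print(self_report_gap(92, 61), score_attendance(61))

# Hypothetical client who attended 9 of 12 scheduled sessions.
print(attendance_pct(9, 12), score_attendance(attendance_pct(9, 12)))
```

Passing `None` for unverifiable attendance, instead of falling back to the client's own number, mirrors the rubric's insistence on an attendance log over self-report.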

Scoring, Interpretation, and the Program-Change Decision Rule

Sum the ten question scores for a total in the 0-20 range.

One more statement of the convention, because the inversion risk is real:

Each question scored 0, 1, or 2:

0 = problem clearly present (worst — diagnostic gap)

1 = suspicion or mild signal

2 = no issue detected (best)

Total: 0-20. Lower score = bigger diagnostic gap. A 3/20 means nearly every question flagged a problem; a 17/20 means only three points were dropped, for example three soft signals. Do not flip the direction: low scores are alarms, not passes.

Routing:

| Score Band | Interpretation | Action |
|---|---|---|
| 0-6 | Measurement or Adherence gap, likely both. | Do not change the program. Fix data and adherence infrastructure first. Reassess in 4-6 weeks. |
| 7-13 | Mixed Programming, Execution, or Recovery issues. | Apply the specific domain corrective. Reassess in 2-3 weeks. |
| 14-20 | All four non-programming domains clear. | Legitimate program-change territory. Rewrite now, with confidence. |

The decision rule as a single sentence: Run the ten-question five-domain sweep, score 0-20; if the total is under 14, do not change the program — fix the flagged domain first. Only at 14 or above is a program change the right fix.

Grab the scorable version. The Client Plateau Diagnostic Checklist packages this exact system into a printable one-pager with the interpretation flowchart, a client-facing 5-question version for in-session use, and the routing map to each domain's corrective. Download it and run it on your next plateaued client before your next session.

This diagnostic is intentionally narrower than the Barbell Medicine lifter-facing 7-point plateau guide updated March 7, 2026. Their tool is a lifter self-diagnostic, treating each item as parallel; this is its trainer-facing counterpart — five causally ordered domains with explicit routing and a scoring rubric their flat checklist does not provide. If your client is an experienced lifter comfortable self-auditing, their tool is right; if you are the trainer triaging a roster, this one is. Complementary, not redundant.

Stop Guessing. Diagnose First, Then Decide.

Plateau is not random. It has predictable causes, and in most cases they live in one of four domains that have nothing to do with program design. The 5-domain diagnostic gives you the routing. From there the work is domain-specific: fix Measurement with tracking infrastructure, Programming with variable rotation or a scheduled deload, Execution with a churn-and-technique audit, Recovery with the five-barrier deep-dive, Adherence with a systems-level intake repair.

"Good programming" is no longer the 2026 differentiator. The differentiator is diagnostic depth — the trainer who can accurately localize a stall across five domains is the one more likely to retain twelve-month clients while peers lose rosters at the ninety-day mark the Trainerize data describes. ACSM 2026, Trainerize 2026, Les Mills 2026, and the Barbell Medicine March update all point the same direction: diagnosis first, intervention second.

The asymmetry is final: the wrong fix applied quickly produces zero progress and a wasted block. The right fix applied slowly — even if it takes ten extra minutes and a hard conversation about attendance or sleep — produces progress. Take the ten minutes. Run the diagnostic. Change the program last. When you do rewrite, do it against a return-to-baseline structure simple enough to execute cleanly — the structure we published for exactly this purpose is where most redesigns should start.

Related diagnostic. If your 5-domain sweep flagged Recovery, the next post to read is Why Your Clients Are Not Recovering — the companion diagnostic walking the five hidden barriers (sleep architecture, allostatic load, nutrition myths, deload deprivation, wearable blindness) every trainer misses. This diagnostic routes into it, and most Recovery-domain flags find their fix there.

Download the Client Plateau Diagnostic Checklist. Ten questions, five domains, under ten minutes per client. Includes the scoring flowchart, three-band interpretation thresholds, a printable one-pager to clip to the client's file, and a simplified five-question client-facing version for in-session use. Scoring is non-inverted — lower score = bigger diagnostic gap. Run it before your next rewrite; in most cases the answer will surprise you, and the ten minutes will save you the four hours you were about to spend on a rewrite the client did not need.
